How to use Technical Spikes to improve your team's Estimation accuracy?

Adjusting technical spikes for estimate accuracy

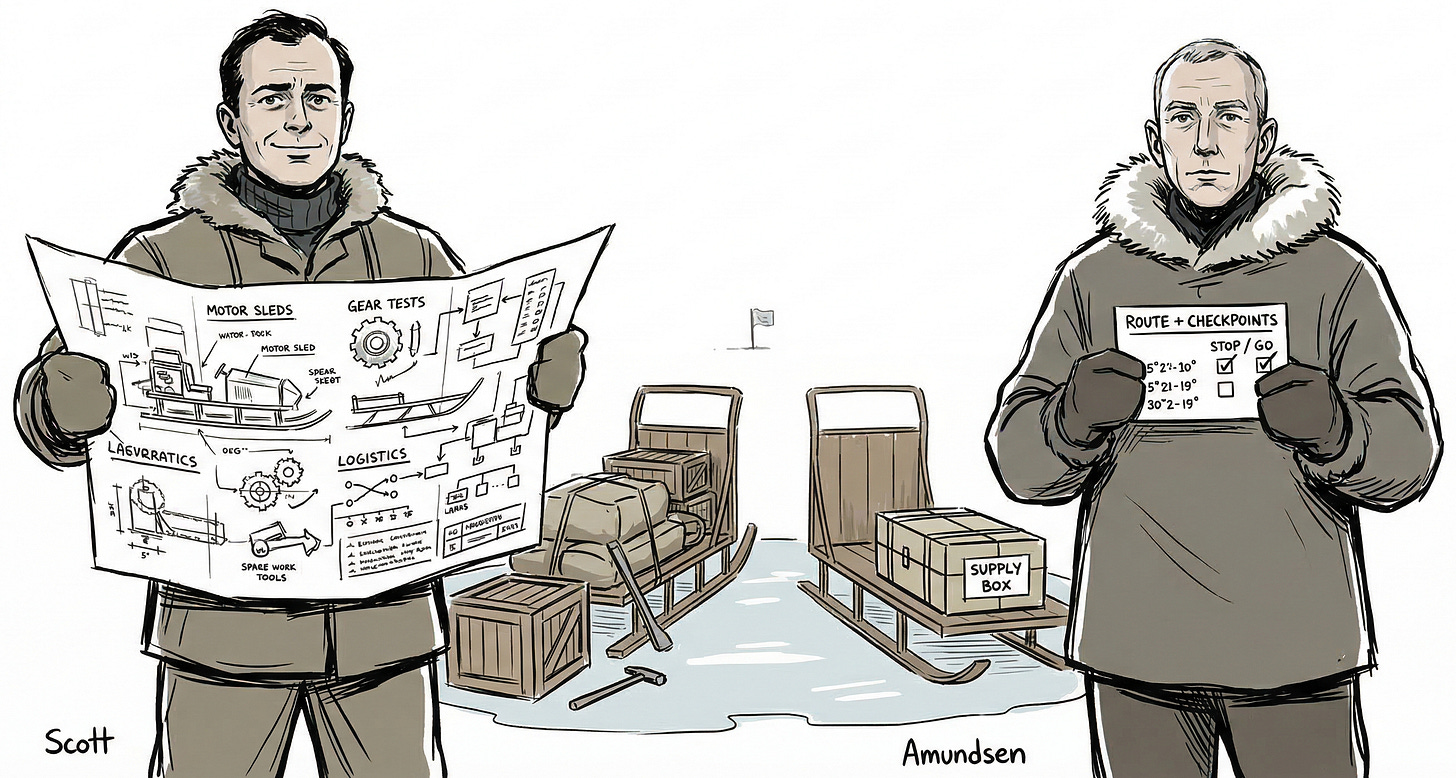

In 1911, two teams set out to reach the South Pole.

Robert Falcon Scott’s team did extensive preparation. They studied the terrain, tested their equipment, and built confidence that the journey was technically feasible. They knew they could make it.

Roald Amundsen’s team did something different. They picked a specific route. They pre-positioned supply depots at exact coordinates and decided, before they left, what they would NOT bring with them, what they would NOT attempt, and what had to be true at each checkpoint for the expedition to continue.

Scott’s team proved the trip was possible. Amundsen’s team defined the trip they were actually going to take.

Scott’s team didn’t succeed.

Why?

What happened?

If you are new to Winning Strategy, check out the posts below:

How to deal with the one difficult team member in your scrum team?

Weekly Recipe: How to deal with the one difficult team member in your scrum team?

Weekly Recipe: Prioritize defects when everything Is critical

Got an urgent question?

Get a quick answer by joining the subscriber chat below.

The Spike for estimation problem is a Strategy problem

We all know about spikes?

If you haven't encountered it (lucky you, in some ways), here's the short version:

When a team faces a major technical unknown, they timebox a few days to build a quick prototype and determine whether the scary thing is actually scary.

If you want to explore the topic further, I have written a post on Spikes that you can check out at the link below:

It's a genuinely good idea.

It comes from the Extreme Programming tradition, it's battle-tested, and mature teams use it all the time.

Here's the thing, though. Spikes don’t always deliver something!

A team runs a spike. The spike succeeds, BUT the estimate doesn't change.

The prototype works.

But when the team sits down to estimate the actual time, the range is just as wide as before. The team is still unsure about the requested time commitment.

How is that possible?

It’s possible because most spikes (in general) solve for the Technical uncertainty. And that distinction between the uncertainty you think you have and the uncertainty you actually have turns out to be one of the most interesting (and underappreciated) dynamics in product development.

Let me show you what I mean.

Story of a mature team

A product team I worked with needed to ship enterprise SSO. Azure AD integration (to be precise) for a big B2B prospect.

Leadership wanted an answer to:

“Can we commit to delivering this in 6 weeks? Yes… or No?”

The team did what any experienced team would.

(1) They timeboxed a spike (3 days). (2) They picked the riskiest technical area (OIDC/SAML. (3) They aimed to “remove uncertainty.”

Which uncertainty? The technical one.

This was the output (what they delivered):

a prototype that successfully authenticated against Azure AD in a sandbox

a short document stating: “We can use OIDC; libraries exist; token validation is straightforward”

a diagram of the auth flow

The spike worked. But the estimates?

They had no estimates.

They still had NO answer for how much time this will actually take to be production-ready.

Stakeholders were confused: “But you proved it works, why can’t you estimate it now?”

What went wrong?

The spike answered one question:

Can we technically authenticate against Azure AD?

Yes. Definitely yes.

But that question was never driving the estimates.

The estimates depended on a certain kind of uncertainty. The kind that involved a long list of decisions and dependencies.

Things like:

Do we need just-in-time user provisioning or SCIM or manual invites?

How do Azure AD groups map to internal permissions?

How do we handle multiple enterprise tenants and domain discovery?

What does the security team require before anything goes live?

What happens with disabled accounts, renamed emails, and duplicate identities?

Can we roll out per customer behind a feature flag?

What does the support team need for the inevitable Monday morning "SSO is broken" fire drill?

None of these is a technical feasibility question. They're decision questions. Policy questions. Cross-team coordination and dependency questions.

Technical uncertainty is the easiest uncertainty to identify, so it is the uncertainty that gets most teams Spike. But estimation uncertainty is usually dominated by scope ambiguity, unknown decisions, and external dependencies.

A spike on Technical uncertainty does approximately nothing for the Estimation uncertainty.

Understand it like this:

Let’s say you're trying to estimate how long it'll take to drive from New York to Los Angeles.

The unknown in your mind is "can my car handle the Rocky Mountains?"

So you test the car on a steep hill. It handles it fine. Great.

Can you estimate your trip?

No!

Why?

The reason you can't estimate the trip is because you haven't yet decided whether you're stopping in Chicago, you don't know if the highway through Kansas is closed, and your friend who's supposed to meet you in Denver hasn't confirmed dates.

The car works. But the trip is still unplannable.

Why did the experienced team get this wrong?

This is the part I find genuinely fascinating, because each reason below corresponds to a different type of cognitive bias.

#1: Knowledge Without Decisions

The team above came back from the spike saying, “We can do OIDC. We could map groups later. We might need SCIM depending on customer requirements.”

Could. Might. Depending.

There's a concept in strategy called "real options," which is the idea that maintaining flexibility has value. And it does! In certain contexts.

But when you're trying to estimate something, optionality is the last thing you want.

Every open option multiplies the “solution space.”

The team were estimating a decision tree. And the expected value of a decision tree with seven unresolved branches is... wide.

#2: No Constraints

The team didn’t decide the Scope.

No one locked down statements like:

“Phase 1 will not support group-based roles”

“Phase 1 supports one tenant per customer”

“Phase 1 is invite-only; no SCIM until Phase 2”

Without constraints, every “maybe” stayed inside the estimate.

As a result, estimates remained vague because, for stakeholders, “done” still meant “everything enterprise customers might want.”

#3: Ignored Dependencies

In most teams, the most likely thing to blow up the timeline is WAITING.

Waiting for:

security review lead time

infra changes (redirect URIs, secret storage, cert rotation)

customer IT coordination (metadata, app registration, testing windows)

The spike the team performed didn’t secure any commitments or timelines from those dependencies.

As a result, the team still couldn’t forecast dates because the critical path was dependent on 3 different teams… Security, Infrastructure and IT.

How did they fix it later?

The team re-ran the spike.

They now had a clear goal in mind for the technical spike:

"By Friday, the spike will help us know exactly what we are shipping in Phase 1, what we are clearly not shipping in Phase 1, and what external conditions must be true for the timeline to hold."

Here’s what the Spike looked like:

Timebox: 2 days

Inputs from: Product + Delivery Lead + Security rep + one engineer

Decision to make by the end of the spike: What is “in scope” and what is “out of scope” for a 6-week timeframe (Phase 1)?

For example:

Scope:

OIDC only (no SAML in Phase 1)

No SCIM in Phase 1

Role mapping is manual (group sync deferred)

Per-customer feature flag rollout

"Done" = audit logging + v1 support runbook

Evidence required:

thin vertical slice in staging with auth, feature flag and logs

lightweight security sign-off via threat model checklist

written non-goals included in the epic

Phase 1 (6 weeks) backlog change required. Splitting the epic into 3 increments with separate estimates:

Increment 1 (2 weeks): SSO login behind flag, single tenant

Increment 2 (2 weeks): Admin config, audit logs, runbook

Increment 3 (2 weeks): Role mapping and SCIM (later)

Estimating this was straightforward.

🎯 Weekly Recipe

Stay tuned for Sunday’s Weekly Recipe, where I’ll share a step-by-step guide for running decision spikes, including templates and facilitation tips.

What did the team learn?

Let’s zoom out for a second

In strategy, there’s a distinction between learning and committing.

Both are valuable. But they serve different functions, and confusing them leads to a very specific kind of organizational frustration, the feeling that you’re doing all the right things and somehow not making progress.

A traditional spike is a learning tool. It says, "We don't know if this is technically feasible, so let's find out." It works great when technical feasibility is the binding constraint.

But on most mature teams, building the thing is rarely the constraint. The binding constraint in such teams is deciding what to build, coordinating external dependencies, and defining what "done" means in a way everyone agrees on.

So the team needs a technical spike that can help them make these decisions.

A Decision Spike.

A decision spike is a commitment tool. It says,

"We have enough information to decide. The decision will help us reshape the backlog."

So, how do you tell if your spike will actually help?

Here's a simple test.

Spikes increase estimate accuracy ONLY when they shrink the solution space. Which means it should produce at least one of the following:

A decision (we chose X, not Y)

Constraints/non-goals (we will not do Z in this release)

The smallest shippable slice (this is what ships first)

Dependency commitments (security/infra/customer IT: who, what, when)

A rewritten backlog (split stories/epics that reflect the new decision)

If your spike does not end with the above, then that's research. Research is valuable! But it won't result in an accurate estimate.

Next time your team raises an estimate spike

Even experienced teams can fall into the trap of running spikes that feel productive but fail to answer the required question.

Next time someone proposes a spike for estimates, ask this:

“What uncertainty will this spike remove, and how will that reduce the range of our estimate?”

If the answer is clear, run the spike.

If the answer is vague, i.e. “we’ll learn more about the problem space,” that’s fine, but set expectations accordingly. You probably won’t be able to provide estimates.

The bottom line: spikes help in estimates when they shrink the solution space, not when they confirm a solution exists. The best teams don’t just spike the technical risk. They spike the ambiguity. And those are almost never the same thing.

That’s it for today!

So…Why Scott’s Team Didn’t Succeed

Let’s close the loop on the story we started with.

Why didn’t Robert Falcon Scott’s team succeed in their race to the South Pole, despite knowing the journey was technically possible?

Because Scott’s team ran a Technical Spike, while Amundsen’s team ran a Decision Spike.

Scott’s preparation proved that the equipment worked and the terrain could be crossed. But just like the engineering team that proved OIDC authentication worked in a sandbox, Scott’s team gathered Knowledge Without Decisions.

Amundsen, on the other hand, shrank his solution space. He committed to one method of transport (dogs). He defined strict non-goals (leaving behind anything that wasn't absolutely essential for survival and speed). He secured his dependencies by laying depots at exact, mathematically calculated coordinates before setting out

In short, Scott confirmed the expedition could work. Amundsen defined exactly how it would work.

The result is what we all know.

Show your support

Every post on Winning Strategy takes a few days of research and 1 full day of writing. You can show your support with small gestures.

Liked this post? Make sure to 💙 click the like button.

Feedback or addition? Make sure to 💬 comment.

Know someone who would find this helpful? Make sure to recommend it.

I strongly advise that you download and use the Substack app. This will allow you to access our community chat, where you can get your questions answered and your doubts cleared promptly.

Further Reading

Connect With Me

Winning Strategy provides insights from my experiences at Twitter, Amazon, and my current role as an Executive Product Coach at one of North America’s largest banks.